The Metric System: From the Wonderful Folks Who Brought Us the Guillotine

I’m going to rant about the Metric System now. It’s something that’s been gnawing at me for years. “What’s this got to do with marketing?” you might well ask. Well, nothing. Or rather, everything, if you adhere to the 9th Unbreakable Rule of Marketing: everything is marketing, including the Metric System. Also, it just bugs me.

I could just toss off this argument with the circulating joke that the world is divided into two types of countries: those on the metric system and the country that walked on the moon. But that would be too glib. Also, killjoys would note that Myanmar and Liberia are holdouts too, and neither of them, to my knowledge, has walked on the moon.

There is actually a logical reason why the system the United States uses (as well as most of the Anglo world in everyday practice) makes more sense than the metric system. The latter, as I will show, is based on something incredibly unintuitive, nerdy, and snobby. At least in linear measurement.

The origins of the Metric System go way, way back to the 1790s, when it was born in Republican France just after the revolutionaries had sated themselves with their orgy of hacking off the heads of tens of thousands of innocent people, and just as they were starting the program of world conquest that the new dictator, Napoleon, would take over. Napoleon, in fact, heartily endorsed the metric system because he was bad at math and liked the idea that he could use his fingers to count stuff.

Tired of so many different standards of measurement throughout Europe (not to mention the rest of the world, but they didn’t matter then), the French reasoned (and they considered themselves the paragons of reason) that a single, standard, interconnected system of base-ten measurements (since humans have ten fingers) was the most rational. This they proceeded to impose on all the other countries they conquered–as well as the Code Napoleon, croissants, and Jerry Lewis. The British, whom they never conquered, told them to stuff it. And the Americans didn’t care what the French were doing.

Ten-hour days. That’s gonna work.

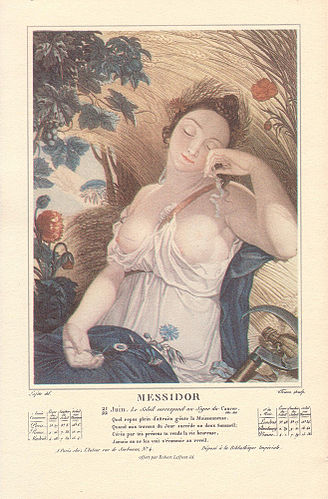

The first thing the French Revolutionaries tried to decimalize was time. In 1793 they divided the day into 10 hours (each of a hundred minutes) and the week into 10 days (décades; yeah, yeah, I know). Clockmakers were frantic. But they left the months at twelve (renaming them with more rational connotations like Thermidor and Fructidor), and each had exactly 30 days, divided evenly into three ten-day “weeks.” That left five days over at the end of the year (six in leap years). So the system was already running into problems thanks to an uncooperative Mother Nature. The decimal system just didn’t apply naturally to time on this planet. The earth, vexingly, takes roughly 365.25 days to revolve around the sun, which isn’t neatly divisible by 10, or even 30. The Creator was not sufficiently revolutionary, evidently. Decimal clock time was quietly suspended in 1795, and after Napoleon made himself Emperor in 1804, he scrapped the revolutionary calendar as well (as of January 1, 1806) and told everybody to go back to the old system. And Fructidor went back to being just August, more or less.
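To see how alien the decimal clock was, here’s a minimal sketch of the 1793 arithmetic: 10 hours × 100 minutes × 100 seconds makes a day of 100,000 decimal seconds instead of the usual 86,400. The function name `to_decimal_time` is hypothetical, my own invention for illustration, and it assumes time of day is counted from midnight.

```python
# Sketch: convert conventional clock time to French Revolutionary decimal time.
# Assumes the 1793 scheme: 10 hours/day, 100 minutes/hour, 100 seconds/minute.

STANDARD_SECONDS_PER_DAY = 24 * 60 * 60    # 86,400
DECIMAL_SECONDS_PER_DAY = 10 * 100 * 100   # 100,000


def to_decimal_time(hours: int, minutes: int, seconds: int = 0) -> tuple[int, int, int]:
    """Return (decimal hours, decimal minutes, decimal seconds) since midnight."""
    elapsed = hours * 3600 + minutes * 60 + seconds
    # Map the fraction of the day elapsed onto the 100,000-second decimal day
    # (integer division truncates any leftover fraction of a decimal second).
    decimal_seconds = elapsed * DECIMAL_SECONDS_PER_DAY // STANDARD_SECONDS_PER_DAY
    d_hours, rest = divmod(decimal_seconds, 100 * 100)
    d_minutes, d_seconds = divmod(rest, 100)
    return d_hours, d_minutes, d_seconds


if __name__ == "__main__":
    for h, m in [(6, 0), (12, 0), (18, 0), (23, 59)]:
        dh, dm, ds = to_decimal_time(h, m)
        print(f"{h:02d}:{m:02d} -> {dh}:{dm:02d}:{ds:02d} decimal")
```

By this reckoning, noon is 5:00:00 and 6 p.m. is 7:50:00. No wonder the clockmakers panicked.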

Not so other measurements.

But what could be more logical than the meter…or metre?

All the Enlightenment people liked the idea of a length based on some rational, decimal standard. At first the meter (or metre, to be French about it) was supposed to be one ten-millionth of the distance from the equator to the North Pole. Now there’s a concept everyone can feel. I mean, who hasn’t walked from the Amazon to the North Pole and counted to ten million? When it turned out they hadn’t measured that distance as accurately as they thought, the meter was first pinned to a platinum-iridium bar near Paris and then, in 1960, redefined as 1,650,763.73 wavelengths of the orange-red emission line of krypton-86 in a vacuum (in honor of Superman, I imagine). Can you feel that? It’s so logical. It’s yea big. You know, a meter. Like a yard, only better.

Finally, in 1983, somebody came up with an even more natural standard for the meter: the distance light travels in a vacuum in 1/299,792,458th of a second. Yeah! That makes so much sense now! It’s so…uh…visceral. You turn on the flashlight, and I’ll use my iPhone stopwatch, set to 1/299,792,458th of a second. Go!
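For anyone who actually wants to set that stopwatch, the arithmetic is easy to sketch. Since 1983 the speed of light has been fixed at exactly 299,792,458 meters per second, so light crosses a meter in 1/299,792,458 of a second, a bit over 3.3 nanoseconds. The yard figure assumes the 1959 international yard of exactly 0.9144 meters (a standard definition I’m supplying, not something from the text above), and the helper name `light_time` is my own.

```python
from fractions import Fraction

# Speed of light in vacuum, exact by definition since 1983 (meters per second).
C = 299_792_458
# Assumption: the international yard, fixed at exactly 0.9144 m in 1959.
YARD_IN_METERS = Fraction(9144, 10000)


def light_time(length_m: Fraction) -> Fraction:
    """Seconds light needs to cover `length_m` meters in a vacuum (exact fraction)."""
    return Fraction(length_m) / C


meter_time = light_time(Fraction(1))
yard_time = light_time(YARD_IN_METERS)

print(f"meter: 1/{1 / meter_time} s, about {float(meter_time) * 1e9:.3f} ns")
print(f"yard:  1/{float(1 / yard_time):,.1f} s, about {float(yard_time) * 1e9:.3f} ns")
```

Keeping everything as exact fractions avoids floating-point rounding until the final display. The yard’s reciprocal doesn’t come out to a whole number, which is its own small rebuke to tidy definitions.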

The meter was supposed to replace the ancient and unenlightened yard, which was roughly the distance from the tip of a grown person’s nose to the tips of their outstretched fingers. The meter was supposed to be about a yard, but more scientifically derived. The yard was just not precise. Not scientific. But it was pretty easy to visualize. A meter, by contrast, runs from your fingertips, past your nose, and not quite halfway on to your other shoulder. Now, given the wide variety of human physiques, the old yard is mighty crude. But there is a standard yard on display (at the Royal Observatory in Greenwich, England, for instance), so it’s been regulated for a couple of centuries. And it’s defined as three feet, or 36 inches, for ease of computation. It’s a very easy measurement to work with.

The other old measurements of length (the inch, the foot, the mile) also have origins in human physiology and experience. An inch is about the width of a grown person’s thumb, for instance. A foot is roughly the length of a man’s foot (size 11). In the sixteenth century a village would take sixteen random men, measure their left feet, and take the average, and that would be the village’s standard foot. Eventually even the foot was standardized throughout the British Empire. I even miss the old cubit, yessir: the distance from your elbow to your middle fingertip. Eighteen inches (half a yard). Couldn’t have built this here ark without it.

What have the Romans ever done for us?

And a mile? This is the best one. “Mile” comes from the Latin mille passus, or 1,000 paces. Back when most people walked everywhere, this made perfect sense. As a Roman legion marched, it would designate one poor sap (presumably as administrative punishment, like peeling potatoes) to count off 1,000 paces, one each time his right foot hit the ground. He was a human odometer. And since an average grown man’s step was a little over 2.6 feet, each pace (right foot to right foot, so two steps) covered about 5.28 feet. Count a thousand of those and you’ve walked a mile, or 5,280 feet (1,760 yards).
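Here’s the legionary’s bookkeeping as a small sketch. The 2.64-foot step is my assumption, picked because it is “a little over 2.6 feet” and makes the numbers come out even; real strides vary from person to person.

```python
# Sketch of the mille passus arithmetic.
# Assumption: an average step of 2.64 ft ("a little over 2.6 feet").
STEP_FT = 2.64
STEPS_PER_PACE = 2       # one pace = right foot to right foot = two steps
PACES_PER_MILE = 1000    # mille passus


def distance_ft(paces_counted: int, step_ft: float = STEP_FT) -> float:
    """Feet covered after the right foot has landed `paces_counted` times."""
    return paces_counted * STEPS_PER_PACE * step_ft


mile_ft = distance_ft(PACES_PER_MILE)
print(f"{PACES_PER_MILE} paces ≈ {mile_ft:,.0f} ft ≈ {mile_ft / 3:,.0f} yd")
```

Change `step_ft` to your own stride to see how far off your personal “mile” would be.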

I’ve actually tried this, checking it against my sophisticated, digital, geo-calibrated pedometer on hikes, and found the method was 99% accurate (even though I’m slightly taller than the average Roman soldier). Another convenient thing about the mile is that at normal walking speed it takes just about twenty minutes to walk one, since we average 3 mph. You can’t do that with a kilometer. You walk somewhere between 4 and 5 kph, so that means it takes… well, you figure it out.
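Since we’ve been dared to figure it out: minutes per unit of distance is just 60 divided by the speed in units per hour. A minimal sketch, using the walking speeds quoted above (3 mph, and 4 to 5 km/h); the function name is my own.

```python
# Minutes needed to walk one unit of distance at a steady speed.
def minutes_per_unit(speed_units_per_hour: float) -> float:
    """60 minutes divided by speed: minutes per mile for mph, per km for km/h."""
    return 60 / speed_units_per_hour


print(f"1 mile at 3 mph: {minutes_per_unit(3):.0f} min")
for kph in (4, 5):
    print(f"1 km at {kph} km/h: {minutes_per_unit(kph):.0f} min")
```

So a kilometer takes somewhere between 12 and 15 minutes, which is a range rather than one round number you can remember.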

So, see? At least for distances and measures of length, the old inch-foot-yard-mile system is far more intuitive and human than the meter. Of course, the American military has gone over to the metric system, mostly as a concession to our sensitive allies in NATO. In military jargon a kilometer is a “klick” (real Americans can’t bring themselves to say “kilometer”). And the U.S. scientific community seems to talk in meters and kilometers and nanometers, that is, until they get to really big measurements like AUs (astronomical units, roughly the radius of the earth’s orbit), light-years, and parsecs. None of these is a tidy power-of-ten multiple of the meter.

And yet we still use the ancient Sumerian sexagesimal (base-60) system for angular and time measurement: 360° in a circle, 24 hours in a day, 60 minutes in both an hour and a degree, 12 months in a year, and so on. Nobody seems to want to make that metric.

“A pint’s a pound the world around.”

Of course, with measures of liquid and volume, both systems are logical. A pint of water (or beer) weighs a pound (“A pint’s a pound the world around.”), and a liter of water (or Chablis) is a kilogram (come on, you know the mnemonic jingle: “A liter’s a kilogram in every place but the United States… and English-speaking countries, Burma, and Liberia… but that’s it.”). So it’s hard to argue for one over the other in terms of reason. And the volume of a liter is 1,000 cubic centimeters. How convenient. Everything in a dec-chauvinist neat little package.
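Both jingles are easy to sanity-check. A minimal sketch, assuming water weighs 1 gram per milliliter (close enough at room temperature). The unit constants are standard definitions I’m supplying, not figures from the text: a US liquid pint is 473.176473 mL, an imperial (British) pint is 568.26125 mL, and a pound is exactly 453.59237 g.

```python
# Sketch: how close are "a pint's a pound" and "a liter's a kilogram"?
# Assumption: water at 1 g/mL (it varies slightly with temperature).
GRAMS_PER_POUND = 453.59237     # exact, international avoirdupois pound
US_PINT_ML = 473.176473         # exact, US liquid pint
IMPERIAL_PINT_ML = 568.26125    # exact, imperial pint
LITER_ML = 1000                 # 1 L = 1,000 cm^3 = 1,000 mL


def water_weight_lb(volume_ml: float) -> float:
    """Weight in pounds of `volume_ml` milliliters of water at 1 g/mL."""
    return volume_ml / GRAMS_PER_POUND


def water_mass_kg(volume_ml: float) -> float:
    """Mass in kilograms of `volume_ml` milliliters of water at 1 g/mL."""
    return volume_ml / 1000


print(f"US pint:       {water_weight_lb(US_PINT_ML):.2f} lb")
print(f"Imperial pint: {water_weight_lb(IMPERIAL_PINT_ML):.2f} lb")
print(f"Liter:         {water_mass_kg(LITER_ML):.2f} kg")
```

The US pint lands within about 4% of a pound; the larger imperial pint comes to roughly a pound and a quarter.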

But I have become accustomed to how much a pound feels from enjoying a pint in a pub. A liter of beer is a little much. I don’t know the heft of a liter pitcher. And I also think the idea of the liter is a marketing trick on the part of Europeans to make you think you’re not paying as much for gas.

My forehead’s a balmy 310 kelvins.

When it comes to temperature, it also seems perfectly logical that zero degrees C should be the freezing point of water (vs. 32° F) and 100° its boiling point (vs. 212° F). The only trouble I have is probably that I’m just used to Fahrenheit over Celsius (or Kelvin, in which absolute zero, the most logical of all starting points, is in fact zero, not −273.15° C or −459.67° F). But I am used to my normal, non-feverish body temperature being 98.6° F, not 37° C (that just seems too cold) or 310 K (too hot). I like it just right. Like Goldi-whatsername.
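The conversions behind those numbers are short enough to sketch. The formulas are the standard ones, °C = (°F − 32) × 5/9 and K = °C + 273.15; the function names are my own.

```python
# Sketch of the standard temperature conversions.
ABSOLUTE_ZERO_C = -273.15


def f_to_c(f: float) -> float:
    """Fahrenheit to Celsius: subtract 32, then scale by 5/9."""
    return (f - 32) * 5 / 9


def c_to_k(c: float) -> float:
    """Celsius to kelvins: shift the zero point down to absolute zero."""
    return c - ABSOLUTE_ZERO_C


for label, f in [("water freezes", 32), ("water boils", 212), ("body temperature", 98.6)]:
    c = f_to_c(f)
    print(f"{label}: {f}°F = {c:.1f}°C = {c_to_k(c):.2f} K")
```

Note that the Kelvin scale is written without a degree sign, and that normal body temperature works out to about 310.15 K.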

But that’s just me. In fact, I’m fine with people who want to use the metric system. It’s just that Americans who do are generally such self-righteous pricks about it, wanting to force it on everybody, like gluten-free cookies. They are so committed to the intuitive feel of how far light travels in 1/299,792,458th of a second (in a vacuum) that they can’t see any other way. To which I’d say I’m committed to how far light travels in about 1/327,857,019th of a second, i.e., a yard.

So I guess my preference is for the natural and human scales measured by inches, feet, yards, and miles. And the pound of a pint of bitter in an English pub. However, I’ll give up rods (5.5 yards), furlongs (220 yards, or about half a high school track), leagues (3 miles, or how far you can walk in an hour), and toises (6 French feet).

See? Didn’t you learn something? In spite of yourself?

And I didn’t bring up marketing. Well…hardly at all.